

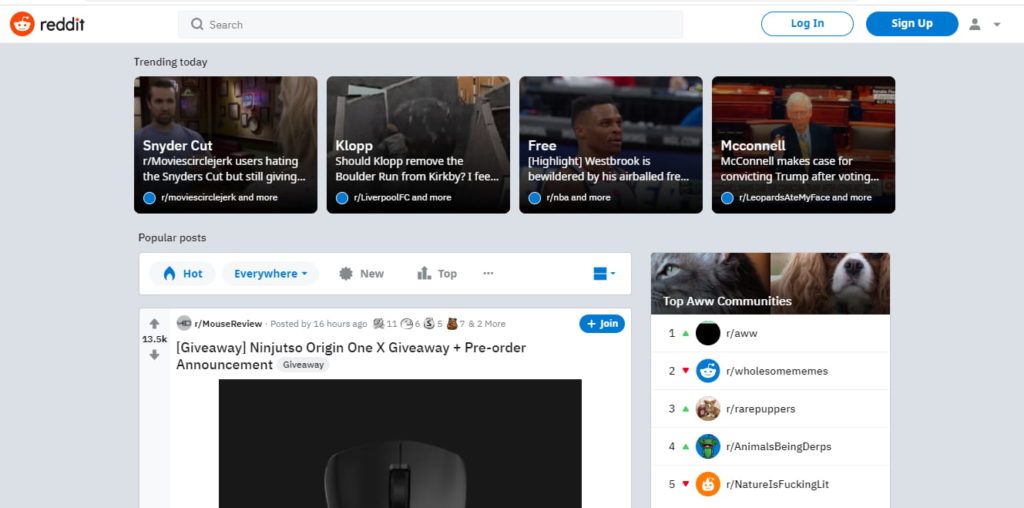

Reddit webscraper

11/20/2023

Does anyone have any helpful links to tutorials or resources where people have written up a massive web-scraping effort like this on AWS?

This caught my eye, as I may be scraping some data as well in the near future. I'll be tracking the price of 1,000 products across about 12 websites. My plan was to use Lambdas and queues as well.

TL;DR: all those links (after the 1st page, at a glance) appear to return the data you need without needing to run JavaScript. Find a lightweight way to extract your text, like jsdom or cheerio.

My approach would probably be to start with a list of links to each state. After that, it doesn't appear you'll need to execute any JavaScript, meaning you can just fetch the HTML and pull your data out of it. For each "type" of target page you have, make a rule. See if you can pull your data using a simple regexp, or run the page through something like jsdom or cheerio and use XPath or query selectors to pull the data.

I'd use a series of queues and Lambdas, or make it a little recursive. For each state, get all the townships (just 1 page hit) and push each township into a queue. The "township crawler" can then pull each item from that queue, fetch every street, and dump those into a second queue.
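The queue-and-rule idea can be tried locally before any AWS pieces are involved. The sketch below is only an illustration, written in Python rather than Node, so regular expressions stand in for jsdom or cheerio selectors. Everything specific in it is an assumption: the URLs, the HTML snippets, the `PAGES` dictionary that fakes the site, and the names `fetch`, `RULES` and `crawl` are all made up for the example. In a real deployment each `deque` would presumably be a managed queue and each loop a separate Lambda.

```python
import re
from collections import deque

# Hypothetical in-memory "site" standing in for real HTTP responses.
PAGES = {
    "/states/ohio": (
        '<a href="/townships/ohio/adams">Adams</a>'
        '<a href="/townships/ohio/berlin">Berlin</a>'
    ),
    "/townships/ohio/adams": (
        '<li class="street">Main St</li><li class="street">Oak Ave</li>'
    ),
    "/townships/ohio/berlin": '<li class="street">Elm St</li>',
}


def fetch(url):
    """Stand-in for a plain HTTP GET; no JavaScript is executed."""
    return PAGES[url]


# One extraction rule per "type" of target page (assumed markup).
RULES = {
    "state": re.compile(r'href="(/townships/[^"]+)"'),
    "township": re.compile(r'<li class="street">([^<]+)</li>'),
}


def crawl(state_urls):
    township_queue = deque()  # filled by the state step
    street_queue = deque()    # filled by the township crawler

    # State step: one page hit per state, push every township link.
    for state_url in state_urls:
        township_queue.extend(RULES["state"].findall(fetch(state_url)))

    # Township crawler: pull each item, fetch it, push every street.
    while township_queue:
        township_url = township_queue.popleft()
        for street in RULES["township"].findall(fetch(township_url)):
            street_queue.append((township_url, street))

    return list(street_queue)


streets = crawl(["/states/ohio"])
```

Keeping the rules in one table means a new page type only needs a new entry and a new queue stage, and a regexp that turns out to be too brittle can be swapped for a real HTML parser without touching the queue logic.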
